Matt Stansberry writes about an Uptime Institute seminar that warned against the misuse of PUE.

Uptime warns data center pros against being benchmarked on PUE

Posted by: Matt Stansberry | Data Center Metrics, DataCenter, Green data center

Uptime Institute executive director Ken Brill warned panelists at an online seminar today to be wary of very low Power Usage Effectiveness (PUE) ratios touted by some data center operators. “If your management begins to benchmark you against someone else’s data center PUE, you need to be sure what you’re benchmarking against,” Brill said.

Brill said he’s seen companies talking about a PUE of 0.8 — which is physically impossible. “There is a lot of competitive manipulation and gaming going on,” Brill said. “Our network members are tired of being called in by management to explain why someone has a better PUE than they do.”

If you’re going to compare your PUE against another company, you need to know what the measurement means. “You need to know what they’re saying and what they’re not saying,” Brill said. “Are you going to include the lights and humidification system? If you’re using free cooling six months of the year, do you report your best PUE?”

Matt was nice enough to send me this link and ask what I thought.

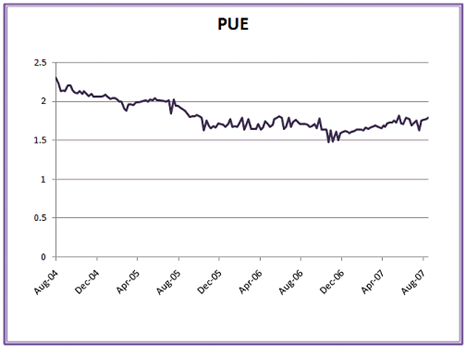

Here is a simple fix to the problem. PUE should be reported as a range, low to high, together with the average calculated over a period of time. This could be a graph. For example, Microsoft shows their PUE with this graph.

This graph shows 3 years of history and how the #’s have fluctuated. This graph is credible. A static PUE # has little meaning as it is just one data point with no background.
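To make the "range plus average" idea concrete, here is a minimal sketch in Python. It assumes PUE is computed the usual way, as total facility energy divided by IT equipment energy, and that you have both figures per reporting interval. The `Reading` type, the `pue_summary` function, and the monthly numbers are all made up for illustration. One design choice to flag: the average here is total facility energy over total IT energy for the whole period, rather than a plain mean of the monthly PUE values, so that low-load months do not count as much as high-load months.

```python
from dataclasses import dataclass


@dataclass
class Reading:
    """Energy totals for one reporting interval (hypothetical structure)."""
    facility_kwh: float  # everything the facility drew: IT, cooling, lights, losses
    it_kwh: float        # energy delivered to the IT equipment only


def pue_summary(readings):
    """Return the low, high, and energy-weighted average PUE for a period."""
    per_interval = [r.facility_kwh / r.it_kwh for r in readings]
    total_facility = sum(r.facility_kwh for r in readings)
    total_it = sum(r.it_kwh for r in readings)
    return {
        "low": min(per_interval),
        "high": max(per_interval),
        "average": total_facility / total_it,
    }


# Made-up figures for three intervals, for illustration only.
monthly = [
    Reading(facility_kwh=1500.0, it_kwh=1000.0),
    Reading(facility_kwh=1300.0, it_kwh=1000.0),
    Reading(facility_kwh=900.0, it_kwh=500.0),
]
summary = pue_summary(monthly)
print(summary)
```

Reporting all three numbers, or plotting the per-interval values, tells the reader whether a quoted PUE is a best month or a typical one.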

I’ve written before about this issue: PUE is dynamic.

I've been meaning to write about PUE, and have been stumped in that it is defined as a metric, and the Green Grid document referenced makes no mention that it is dynamic. In reality PUE will be a dynamic # that changes as the load changes in a room. How ironic would it be that your best PUE # is when all the servers are running at near capacity, and shutting down servers to save power will increase your PUE? Or your energy efficient cooling system uses large amounts of water in Southern California where it is just a matter of time before water shortages will cause more environmental issues?
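The server shutdown irony is easy to see with a toy model. The sketch below assumes the non-IT overhead (cooling, power distribution losses, lighting) has a fixed part that is drawn regardless of load and a part that scales with IT load. The split and the numbers are my own assumptions for illustration, not measurements from any real facility.

```python
def pue(it_kw, fixed_overhead_kw, variable_overhead_ratio):
    """PUE under a toy model: overhead = fixed part + a fraction of IT load."""
    if it_kw <= 0:
        raise ValueError("IT load must be positive for PUE to be defined")
    overhead_kw = fixed_overhead_kw + variable_overhead_ratio * it_kw
    return (it_kw + overhead_kw) / it_kw


# Illustrative numbers only: 200 kW of fixed overhead, plus 0.3 kW per kW of IT load.
full_load = pue(it_kw=1000.0, fixed_overhead_kw=200.0, variable_overhead_ratio=0.3)
half_load = pue(it_kw=500.0, fixed_overhead_kw=200.0, variable_overhead_ratio=0.3)

print(f"full load: {full_load:.2f}, half load: {half_load:.2f}")
```

In this model, halving the IT load cuts total draw from 1,500 kW to 850 kW, yet PUE gets worse because the fixed overhead is spread over fewer IT kilowatts. The same arithmetic shows why a PUE below 1.0 cannot happen: the numerator always includes the IT load itself.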

What helped me to think of PUE as a dynamic # is to think of it as a quality control metric. The quality of the electrical and mechanical systems and their operations over time are inputs into PUE. As load changes and servers are turned off, the variability of the power and cooling systems influences your PUE. So, PUE can now have a statistical range of operation given the conditions. This sounds familiar. It's statistical process control.

Statistical Process Control (SPC) is an effective method of monitoring a process through the use of control charts. Much of its power lies in the ability to monitor both process centre and its variation about that centre. By collecting data from samples at various points within the process, variations in the process that may affect the quality of the end product or service can be detected and corrected, thus reducing waste as well as the likelihood that problems will be passed on to the customer. With its emphasis on early detection and prevention of problems, SPC has a distinct advantage over quality methods, such as inspection, that apply resources to detecting and correcting problems in the end product or service.
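As a rough sketch of what SPC applied to PUE could look like, the Python below builds an individuals (XmR) control chart: the centre line is the mean of a baseline period, and the control limits sit 2.66 average moving ranges either side of it (2.66 is the standard constant for this chart type, 3 divided by 1.128). Picking this chart type is my assumption; nothing in the Green Grid or Uptime material prescribes it. The function names and PUE readings are made up for illustration.

```python
from statistics import mean


def individuals_chart_limits(baseline):
    """Return (lower, centre, upper) control limits for an individuals chart."""
    if len(baseline) < 2:
        raise ValueError("need at least two baseline readings")
    centre = mean(baseline)
    # Moving range: absolute change between consecutive readings.
    moving_ranges = [abs(b - a) for a, b in zip(baseline, baseline[1:])]
    spread = 2.66 * mean(moving_ranges)
    return centre - spread, centre, centre + spread


def out_of_control(readings, baseline):
    """Indexes of readings that fall outside the baseline control limits."""
    lower, _, upper = individuals_chart_limits(baseline)
    return [i for i, value in enumerate(readings) if value < lower or value > upper]


# Made-up PUE readings, for illustration only.
baseline = [1.60, 1.62, 1.58, 1.61, 1.59, 1.60]
new_readings = [1.61, 1.75, 1.59]

lower, centre, upper = individuals_chart_limits(baseline)
flagged = out_of_control(new_readings, baseline)
print(f"limits: {lower:.3f} to {upper:.3f}, flagged indexes: {flagged}")
```

A reading outside the limits is a prompt to go look at what changed in the power and cooling systems, not a verdict. The chart also shows the normal band of operation, which is the range I'd want reported alongside any single PUE #.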

At Data Center Dynamics Seattle, Microsoft’s Mike Manos said the average PUE for Microsoft data centers is 1.6, and his team is driving for 1.3 in 2 years. When Mike hits a PUE of 1.3 I am sure he’ll show us a graph to prove Microsoft has hit it.