Amazon Web Services has a post on the Economics of AWS.

The Economics of AWS

For the past several years, many people have claimed that cloud computing can reduce a company's costs, improve cash flow, reduce risks, and maximize revenue opportunities. Until now, prospective customers have had to do a lot of leg work to compare the costs of a flexible solution based on cloud computing to a more traditional static model. Doing a genuine "apples to apples" comparison turns out to be complex — it is easy to neglect internal costs which are hidden away as "overhead".

We want to make sure that anyone evaluating the economics of AWS has the tools and information needed to do an accurate and thorough job. To that end, today we released a pair of white papers and an Amazon EC2 Cost Comparison Calculator spreadsheet as part of our brand new AWS Economics Center. This center will contain the resources that developers and financial decision makers need in order to make an informed choice. We have had many in-depth conversations with CIOs, IT Directors, and other IT staff, and most of them have told us that their infrastructure costs are structured in a unique way and difficult to understand. Performing a truly accurate analysis will still require a deep, thoughtful look at an enterprise's costs, but we hope that the resources and tools below will provide a good springboard for that investigation.

The AWS team has laid out the costs of AWS Cloud vs. owned IT infrastructure.

The Economics of the AWS Cloud vs. Owned IT Infrastructure. This paper identifies the direct and indirect costs of running a data center. Direct costs include the level of asset utilization, hardware costs, power efficiency, redundancy overhead, security, supply chain management, and personnel. Indirect factors include the opportunity cost of building and running high-availability infrastructure instead of focusing on core businesses, achieving high reliability, and access to capital needed to build, extend, and replace IT infrastructure.

If you have ever wished for a spreadsheet to help you calculate data center costs, AWS now has one.

The Amazon EC2 Cost Comparison Calculator is a rich Excel spreadsheet that serves as a starting point for your own analysis. Designed to allow for detailed, fact-based comparison of the relative costs of hosting on Amazon EC2, hosting on dedicated in-house hardware, or hosting at a co-location facility, this detailed spreadsheet will help you to identify the major costs associated with each option. We've supplied the spreadsheet because we suspect many of our customers will want to customize the tool for their own use and the unique aspects of their own business.

They also launched an Economics Center.

The AWS Economics Center provides access to information, tools, and resources to compare the costs of Amazon Web Services with IT infrastructure alternatives. Our goal is to help developers and business leaders quantify the economic benefits (and costs) of cloud computing.

Overview

Amazon Web Services (AWS) gives your business access to compute, storage, database, and other in-the-cloud IT infrastructure services on demand, charging you only for the resources you actually use. With AWS you can reduce costs, improve cash flow, minimize business risks, and maximize revenue opportunities for your business.

- Reduce costs and improve cash flow.

Avoid the capital expense of owning servers or operating data centers by using AWS’s reliable, scalable, and elastic infrastructure platform. AWS allows you to add or remove resources as needed based on the real-time demands of your applications. You can lower IT operating costs and improve your cash flow by avoiding the upfront costs of building infrastructure and paying only for those resources you actually use.

- Minimize your financial and business risks.

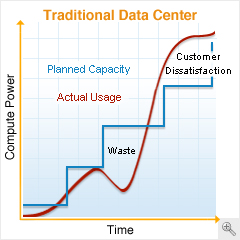

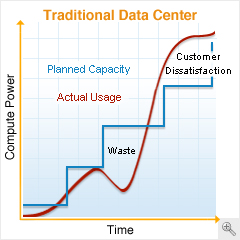

Simplify capacity planning and minimize both the financial risk of owning too many servers and the business risk of not owning enough servers by using AWS’s elastic, on-demand cloud infrastructure. Since AWS is available without contracts or long-term commitments and supports multiple programming languages and operating systems, you retain maximum flexibility. And for many businesses, the security and reliability of the AWS platform often exceeds what they could develop affordably on their own.

- Maximize your revenue opportunities.

Maximize your revenue opportunities with AWS by allocating more of your time and resources to activities that differentiate your business to your customers – instead of focusing on IT infrastructure “heavy lifting.” Use AWS to provision IT resources on-demand within minutes so your business’s applications launch in days instead of months. Use AWS as a low-cost test environment to sample new business models, execute one-time projects, or perform experiments aimed at new revenue opportunities.

Capacity vs. Usage Comparison

This last graph is the Christmas wish list for enlightened green IT thinkers: IT load that tracks demand.
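To make the capacity vs. usage idea concrete, here is a rough Python sketch. It is not taken from the AWS white papers or the calculator spreadsheet. The demand figures, the fixed capacity of 8 servers, and the function and class names are all made up for illustration. It only counts how many server-hours sit idle and how many go unserved when capacity is fixed and demand moves around.

```python
from dataclasses import dataclass


@dataclass
class CapacityReport:
    idle_server_hours: float   # provisioned but unused (the "too many servers" risk)
    unmet_server_hours: float  # demand above capacity (the "not enough servers" risk)
    utilization: float         # served demand divided by provisioned capacity


def fixed_capacity_report(hourly_demand, capacity):
    """Compare a fixed number of servers against hourly demand (in servers)."""
    idle = sum(max(capacity - d, 0) for d in hourly_demand)
    unmet = sum(max(d - capacity, 0) for d in hourly_demand)
    served = sum(min(d, capacity) for d in hourly_demand)
    provisioned = capacity * len(hourly_demand)
    utilization = served / provisioned if provisioned else 0.0
    return CapacityReport(idle, unmet, utilization)


# Hypothetical demand curve: servers needed in each of eight hours.
demand = [2, 3, 5, 9, 12, 6, 3, 2]

# Hypothetical fixed data center sized at 8 servers.
fixed = fixed_capacity_report(demand, capacity=8)
print(fixed)
```

With these invented numbers, the fixed setup pays for idle servers in most hours and still falls short at the peak. An idealized elastic setup, where capacity equals demand every hour, would have no idle or unmet hours by construction. Real deployments land somewhere in between, since scaling takes time and instances come in discrete sizes.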