Google is rumored to be close to purchasing 111 8th Ave.

Google Near Purchase of NYC Landmark Building at 111 Eighth Ave.

By SAM GUSTIN
Posted 6:22 PM 10/27/10

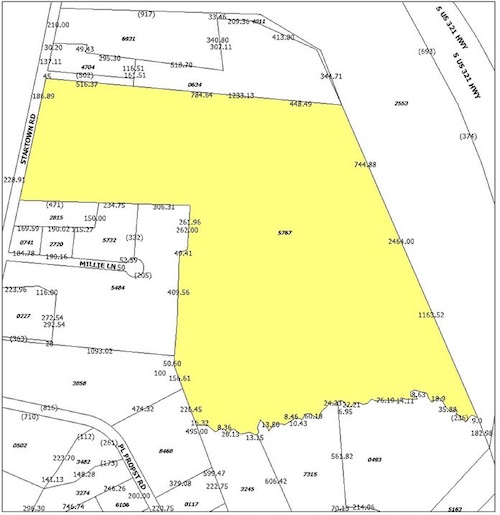

Last month, we told you that the gargantuan 111 Eighth Ave., a building that occupies an entire city block in Chelsea and is home to Google's (GOOG) New York headquarters, is for sale.

Now, it appears that the likely buyer is none other than Google itself. Rumored sale price? A cool $2 billion, according to the New York Post. 111 Eighth Ave. is the former Port Authority headquarters and one of the city's largest buildings, at nearly 3 million square feet.

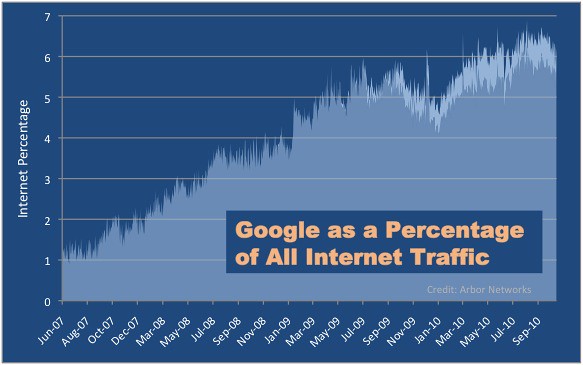

It also happens to be one of the East Coast's key "telecom hotels" -- centralized locations where groups of communications and networking firms hook up their hardware. Google is already the largest tenant, leasing 500,000 square feet over three floors.

The NY Daily News says the price may go as high as $2.9 billion.

Google reportedly to pay fourfold increase on $2.9 billion 111 Eighth Avenue building

BY NICOLE CARTER

DAILY NEWS STAFF WRITER

Wednesday, October 27th 2010, 5:22 PM

111eigth.com

The building is reportedly the fourth-largest office building in the city.

Google is apparently ogling Chelsea’s 111 Eighth Ave. building ... for a mind-blowing $2.9 billion.

Given its carrier-hotel status, this could be Google’s most expensive data center asset.

Google reportedly already rents 550,000 square feet of space in the building. Because the building is equipped for high-tech businesses, there are plenty of other interested buyers, including foreign sheiks and wealthy locals, the Observer reports.