I just interviewed Reed Smith, Director of Product Management for StrataScale, and we discussed their IronScale product. Their announcement is here.

This is an interesting extension of Raging Wire's colocation services into hosting a virtualized infrastructure.

In many ways the StrataScale offering is a greener data center solution for companies with 50 to 500 employees who run their own servers on site or even in a colocation facility. Why? Because the solution is designed with virtualization as an assumption. The good thing is that, unlike services such as Amazon Web Services, you can also choose to skip virtualization and run directly on the hardware. Also, the servers are not shared with other customers. The virtualized servers are all yours.

This was my first chat with Reed, but I am sure I’ll be talking to him again to discuss StrataScale’s solution.

Part of StrataScale’s value is its UI for system provisioning.

You can watch a demo here.

The physical servers are listed.

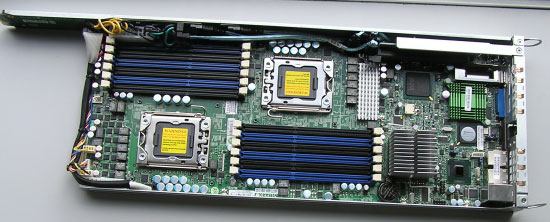

Available in 3 levels of automated integrated bundled environments, IronScale is built on real, physical dual- and quad-core x86 servers.

Level 1 Server...2 cores, 4GB RAM, 70GB storage

Level 2 Server...4 cores, 8GB RAM, 70GB storage

Level 3 Server...8 cores, 16GB RAM, 70GB storage
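To make the three levels easier to compare, here is a minimal sketch that models them as data and picks the smallest level that covers a given requirement. The core, RAM, and storage figures come from the list above; the `ServerLevel` class and `smallest_level` helper are hypothetical names I made up for illustration and are not part of any StrataScale tool or API.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ServerLevel:
    """One IronScale server bundle, as listed in the announcement."""
    name: str
    cores: int
    ram_gb: int
    storage_gb: int


# Figures copied from the level list above, ordered smallest to largest.
LEVELS = [
    ServerLevel("Level 1", cores=2, ram_gb=4, storage_gb=70),
    ServerLevel("Level 2", cores=4, ram_gb=8, storage_gb=70),
    ServerLevel("Level 3", cores=8, ram_gb=16, storage_gb=70),
]


def smallest_level(cores_needed: int, ram_gb_needed: int) -> Optional[ServerLevel]:
    """Hypothetical helper: return the smallest level that meets both
    requirements, or None if even Level 3 is too small."""
    for level in LEVELS:
        if level.cores >= cores_needed and level.ram_gb >= ram_gb_needed:
            return level
    return None


if __name__ == "__main__":
    # A workload needing 3 cores and 6GB of RAM does not fit Level 1.
    print(smallest_level(3, 6))
```

Note that all three levels list the same 70GB of local storage, so storage never changes which level is picked here.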

Each server bundle features:

Dual-/quad-core Intel® Xeon® CPUs

70GB of local RAID 50 storage

Your choice of a Red Hat® Linux® or Windows® Server OS

1 Mbps dedicated bandwidth

2 networks and 8 external IP addresses per client

100 internal IP addresses per network

VPN for 1 site-to-site and 5 remote users per client

24x7 Monitoring and Management

KVM access

The data center facility listed is run by Raging Wire.

Our Tier IV Data Center

Staff

Multi-disciplined, certified engineering staff and 24x7 support team of IT experts is rigorously trained, has extensive industry expertise, and is committed to clients, standards, and best practices.

World-Class Facility

Tier IV class "A+" 200,000+ square foot data center

Engineered for 99.999% availability

Power and cooling is scalable beyond 200 Watts per sq. ft.

Carrier neutral high-speed Internet, over 20 Gigabits of bandwidth

N+2 minimum system and component redundancy for concurrent maintenance and fault tolerance

On-site 69 kV power substation and well

Financial-grade physical security

Their PUE is not advertised, but given the highly virtualized environment the performance per watt should be high.
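For readers new to the term, PUE (Power Usage Effectiveness) is total facility energy divided by the energy delivered to IT equipment, so 1.0 is the theoretical best. It measures facility overhead such as cooling and power distribution, while virtualization mostly improves how much work each watt of IT load does. A minimal sketch of the calculation follows; the `pue` function name and the numbers in the example are placeholders I chose for illustration, not Raging Wire measurements.

```python
def pue(total_facility_kwh: float, it_equipment_kwh: float) -> float:
    """Power Usage Effectiveness = total facility energy / IT equipment energy.

    Both inputs must cover the same time period.
    """
    if it_equipment_kwh <= 0:
        raise ValueError("IT equipment energy must be positive")
    if total_facility_kwh < it_equipment_kwh:
        raise ValueError("total facility energy cannot be less than IT energy")
    return total_facility_kwh / it_equipment_kwh


if __name__ == "__main__":
    # Placeholder values only: 1,500 kWh drawn by the whole facility
    # while the IT equipment consumed 1,000 kWh over the same period.
    print(pue(1500.0, 1000.0))
```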

Environmental Responsibility

At RES, we have a healthy respect for our environment. Which is why we have always built common sense and green practices into everything we do. But being environmentally responsible isn't just good for the community and the planet - it's good business. For our efforts, we have received numerous environmental awards, but we don't stop there. We constantly refine our processes to improve our power and resource efficiency, reduce and recycle wastes, and help us to operate more productively.

Efficiency and sustainability are key to everything we do.

Our power conservation efforts have saved more than 4,000,000 kWh of electricity.

We've increased our chilled water plant and cooling efficiency, conserving an additional 250,000 kWh of electricity per month.

Our two chemical-free water treatment systems have eliminated chemical use entirely and reduced our water discharged by 80%.

We recycle 100% of the cardboard, steel, copper, and aluminum we use for construction and other activities. In paper and cardboard recycling alone, it is equivalent to 36+ mature trees per year.

We recycle 100% of our lead acid batteries, over 700,000 pounds worth, and donate the proceeds to the United States Wolf Refuge.

We recycle more than 2,000 pounds of electronic waste per year, from both our own company and as a free service to our valued clients.

Our social and environmental responsibility is an essential part of contributing to and protecting the community we live in.