I was talking to a senior Google guy at a conference and asked what he does. His response was "I work on Google's infrastructure."

What is infrastructure, as defined by SearchDataCenter?

- In information technology and on the Internet, infrastructure is the physical hardware used to interconnect computers and users. Infrastructure includes the transmission media, including telephone lines, cable television lines, and satellites and antennas, and also the routers, aggregators, repeaters, and other devices that control transmission paths. Infrastructure also includes the software used to send, receive, and manage the signals that are transmitted.

In some usages, infrastructure refers to interconnecting hardware and software and not to computers and other devices that are interconnected. However, to some information technology users, infrastructure is viewed as everything that supports the flow and processing of information.

Infrastructure companies play a significant part in evolving the Internet, both in terms of where the interconnections are placed and made accessible and in terms of how much information can be carried how quickly.

But the Google guy clarified that he works on the search and services infrastructure that supports Google services. Oh, this is interesting: Google defines infrastructure at a higher level than most people do.

This fits with a competitive edge Google has, which GigaOm describes as an infrastructure advantage.

Google’s Growing Infrastructure Advantage

By Stacey Higginbotham Mar. 17, 2010, 7:50am PDT

Google’s content comprises between 6 and 10 percent of global Internet traffic, making its internal network one of the top three ISPs in the world, according to Arbor Networks. The maker of deep packet inspection equipment, which runs a survey of international ISPs, detailed Google’s traffic in a blog post Tuesday.

The original information came from the Arbor Networks blog post, which has details on Google's use of direct peering.

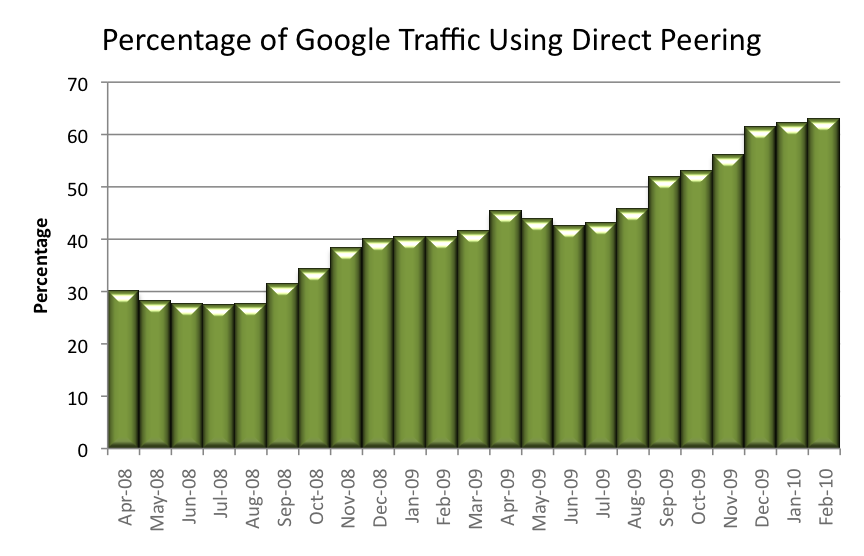

The graph below shows an estimate of the average percentage of Google traffic per month using direct interconnection (i.e. not using a transit provider). As before, this estimate is based on anonymous statistics from 110 providers. In 2007, Google required transit for the majority of their traffic. Today, most Google traffic (more than 60%) flows directly between Google and consumer networks.

So, even though the data center crowd thinks of data centers as infrastructure, Google has a bigger picture.

But even building out millions of square feet of global data center space, turning up hundreds of peering sessions and co-locating at more than 60 public exchanges is not the end of the story.

Over the last year, Google deployed large numbers of Google Global Cache (GGC) servers within consumer networks around the world. Anecdotal discussions with providers, suggests more than half of all large consumer networks in North America and Europe now have a rack or more of GGC servers.

So, after billions of dollars of data center construction, acquisitions, and creation of a global backbone to deliver content to consumer networks, what’s next for Google?

I am regularly surprised by how often data center discussions cover only the data center itself, not the data center as part of the overall system.