DatacenterKnowledge reports on Wal-Mart's selection of Colorado Springs for a new data center site.

Wal-Mart Confirms Colorado Springs Project

July 22nd, 2011 : Rich Miller

Wal-Mart confirmed Thursday that it will build a major corporate data center in Colorado Springs, boosting efforts by local officials to boost the city as a data center destination. Construction costs for the new data center are estimated at $100 million, and initially, the data center would need 20 to 40 full-time employees with annual salaries of $30,000 to $70,000.

The Colorado Springs Gazette reports more details from local economic development officials.

City officials say based on their estimates, and using information provided by Wal-Mart, the facility will cost about $100 million to build; the company declined to disclose the cost. Wal-Mart also is expected to invest another $50 million to $100 million in machinery and equipment over the initial 15-year life of the facility, city officials say.

The center will employ about 30 people with salaries of $30,000 to $70,000, Phair said. It will be built on 24 acres Wal-Mart has contracted to buy southeast of InterQuest and Voyager parkways on the city’s far north side; construction is scheduled to begin in October and is expected to be completed in late 2012, Phair said.

Wal-Mart is following the Fight Club rule for its data centers.

In Wal-Mart’s case, “the new data center will have strategic importance to our business and help us serve our customers more effectively,” Rollin Ford, Wal-Mart’s executive vice president and chief information officer, said in a statement. The company declined to be more specific about what operations will take place in the Springs.

Colorado Springs touted how it beat North Carolina.

Among reasons Colorado Springs was chosen, Phair said: low-cost and reliable electricity, since data centers consume vast amounts of power; an available and highly educated work force; and a location that’s largely free from natural disasters. Financial incentives also were a factor, Phair said.

To land the data center, Colorado Springs beat out Charlotte, N.C., which Quimby and White said had offered incentives totaling about $25 million.

Wal-Mart states its sustainability goals on its sustainability web site.

Sustainability

At Walmart, we know that being an efficient and profitable business and being a good steward of the environment are goals that can work together. Our broad environmental goals at Walmart are simple and straightforward:

- To be supplied 100 percent by renewable energy;

- To create zero waste;

- To sell products that sustain people and the environment.

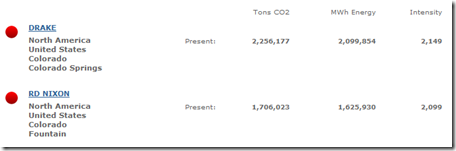

When you look at CARMA.ORG's web site for Colorado Springs power, the carbon impact looks large.

I wonder how Wal-Mart plans to supply its new Colorado Springs data center with 100 percent renewable energy. Here is a greener hybrid truck Wal-Mart uses.

Walmart Hybrid Assist Truck

Walmart is working with manufacturing partners to develop new technologies to help reduce our environmental footprint, are viable for our business and provide a return on investment. This truck is a hybrid assist, which means the batteries kick in when the truck needs more power (at start up or going up a hill). Freightliner is testing a new location for the batteries – on the back axle, which is designed to put power where it’s needed and mitigate power loss. This truck represents a test for Walmart. We will learn from this vehicle and work with Freightliner to continue to enhance the technology.