I wrote this post for Gigaom on what the infrastructure of IoT is. My view is that it goes beyond the typical abilities such as scalability and availability. I have included part of what I wrote below; for the full text, go to the Gigaom post.

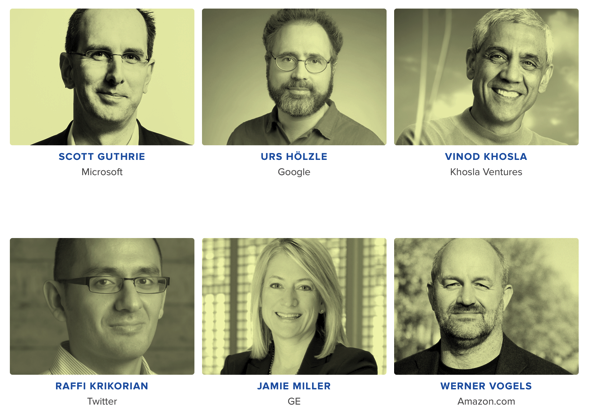

I am moderating a panel discussion on the Infrastructure of IoT at Gigaom Structure on June 19. Please join me there or watch the live stream. This event should be one of the best; here are the headline speakers.

Here is my post on IoT.

Infrastructure of IoT, beyond availability and scalability

May 24, 2014 - 12:00 PM PDT

SUMMARY: To handle the addition of billions more devices, including sensors that talk to each other and not necessarily to us, how must our infrastructure evolve? That’s a big topic on tap for Structure 2014.

Infrastructure is something that people are used to not thinking about. It is normally associated with roads, water, electricity, and telecommunications: the things it takes for a society to function. People just want infrastructure to work when they need it. When roads are being repaired, when a water line breaks, when the power is out, or when the Internet is down, that’s when people pay attention to infrastructure. Most would assume that the infrastructure for IoT should be just like the rest of information technology (IT).

In IT, search, email, finance, and social networks are the infrastructure for being connected. When people talk about infrastructure for IT, they think of security, availability, scalability, and reliability as the key capabilities to focus on. Whenever there is a security breach or services go down, teams scramble to remedy the situation. The internet of things is being driven by many of the same technology companies that users are familiar with. Run a Google search for “IoT” and the top three paid advertisers are Microsoft, Cisco, and Intel.

Building IoT Infrastructure the same as other IT Infrastructure

If you take a traditional approach, IoT infrastructure is the same as IT infrastructure, only scaled to work with billions and billions of IoT devices. Servers, networks, and storage now operate at a scale that allows billions of devices to be connected to cloud services. Along with this scale come millions of failure events, each of which could be a degradation of device performance or an outright failure. One view is that users will simply get another device, run setup on it, and connect it as a replacement, and the old one disappears. Another view is that we have the history of your IoT device, so we can help you repair it, replace it, or upgrade it. The damaged IoT device is part of a bigger experience, and a device failure is an opportunity to build a new and better experience.

Some of you may still think, “I just want to build highly available, secure, and scalable infrastructure for IoT,” and that users will expect it to be no different from their existing IT services. But I would argue that we need more than that. We need IoT infrastructure that does more than compete on availability, security, and scalability. We need infrastructure that provides a sort of institutional memory of what you’ve done with your devices. Where do you think the money is in the infrastructure of IoT: in a low-cost infrastructure that quickly gets commoditized, or in a value-added service that users of IoT infrastructure will stick with?