I had a chance to interview Sun’s Dean Nelson. Before I could even ask a question, Dean started out by applauding Microsoft’s and Google’s efforts to share data center information. He went on to say that these efforts drive energy efficiency and transparency about what works and what doesn’t.

Given Microsoft’s and Google’s market presence, they are driving awareness and demand for more information. I think Sun is one of the beneficiaries, which is why Dean is becoming a data center star, focused on opening up the data center industry to share its numbers.

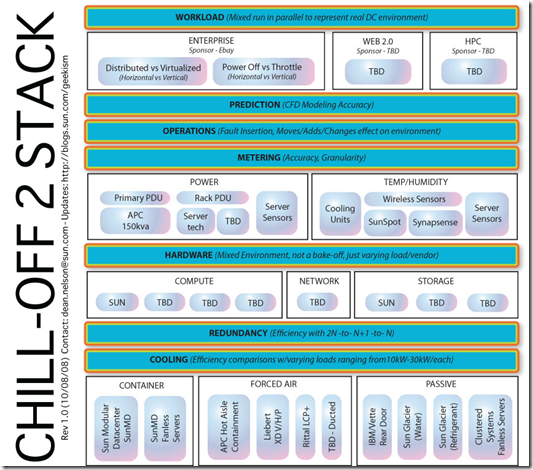

A specific example of Sun’s efforts is the Chill-off, where Sun partnered with the Silicon Valley Leadership Group and Lawrence Berkeley Labs. This PDF has more info.

Another area Dean is working on is www.datacenterpulse.com

Data Center Pulse is an exclusive group of global datacenter owners, operators and users. The goal of this community is to track the pulse of the industry and influence the future of the datacenter through discussion and debate.

And, OpenEco.org

OpenEco.org is a global on-line community that provides free, easy-to-use tools to help participants assess, track, and compare energy performance, share proven best practices to reduce greenhouse gas (GHG) emissions, and encourage sustainable innovation.

Another area Dean and I discussed is the inefficiency of dev, test, and pre-production labs. Here is a list of reasons these labs are inefficient:

- Few labs are shared resources across a company, as most are dedicated to specific teams who have accumulated the space and equipment.

- Most of these labs are in office space and have a PUE in the 2.5 to 3.0 range, but no one really knows, as the HVAC and power systems are part of the overall office space. (Note: as a piece of trivia, I was talking to EPA’s Andrew Fanara, and he said part of what got the EPA/Energy Star group interested in energy efficient servers and data centers was that when the EPA tried to categorize power consumption for buildings, the exceptions were the buildings that had data centers in them. Those buildings had power usage that made it difficult to quantify what an energy efficient building was.)

- Most labs get the leftover equipment; almost no one puts state-of-the-art equipment in their labs. The exception is vendors’ demo labs, but these are few.

- The number of unused servers that should be decommissioned is just as bad as in production environments. When Sun consolidated its lab space, it found that 15% of the servers were not being used but had been left on from past projects.

- Resource usage is extremely cyclical. What happens to all that equipment when the product ships and the team takes a vacation? Does anyone turn off the lab?

- Limited lab budgets force lab staff to make do with what they have. Their focus is just to get it working. Production issues are to be looked at later.

- No energy monitoring of lab space. This is changing, and I am having fun working with a few companies who are putting systems together, but it is still in the early days.
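
To put rough numbers on the list above, here is a minimal sketch of the arithmetic. It uses the standard definition of PUE (total facility power divided by IT equipment power) together with the 2.5 to 3.0 PUE range and Sun’s 15% unused-server figure. The 100 kW lab IT load is a made-up illustrative value, the function names are hypothetical, and the sketch assumes an unused server draws the same power as the average server in the lab.

```python
def facility_power_kw(it_load_kw: float, pue: float) -> float:
    """Total facility power implied by an IT load and a PUE (PUE = total / IT)."""
    if pue < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    return it_load_kw * pue


def idle_waste_kw(it_load_kw: float, idle_fraction: float, pue: float) -> float:
    """Facility power spent on servers that are powered on but unused.

    Assumes idle servers draw an average share of the IT load.
    """
    return facility_power_kw(it_load_kw * idle_fraction, pue)


# Hypothetical lab: 100 kW of IT load (an assumed figure, not from the interview).
LAB_IT_LOAD_KW = 100.0
IDLE_FRACTION = 0.15  # share of unused servers Sun found when consolidating labs

for pue in (2.5, 3.0):
    total = facility_power_kw(LAB_IT_LOAD_KW, pue)
    wasted = idle_waste_kw(LAB_IT_LOAD_KW, IDLE_FRACTION, pue)
    print(f"PUE {pue}: total {total:.1f} kW, unused servers cost {wasted:.1f} kW")
```

Under these assumptions, every kilowatt of forgotten server load costs 2.5 to 3 kilowatts at the meter, which is why turning off unused lab equipment pays back faster in an office-space lab than in an efficient production data center.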

Add all this up, and these labs are one of the most wasteful areas, with potentially the largest ROI percentage. Virtualization has a high rate of adoption in lab environments, which makes labs an ideal place to start using cloud computing, utility-style paradigms. IBM has its offering in Rational Test Lab Manager, and you can expect more vendors to do the same.

It was a quick conversation, but hopefully the first of many.

I do agree with Dean that Microsoft and Google are making the industry better for all of us, except for those people who think Microsoft and Google are the enemy.