Apple and Intel are announcing Thunderbolt support in Apple's new notebooks.

Intel's Light Peak event, Thursday 10 a.m. PT (live blog)

A photo of Intel's Light Peak technology

(Credit: Intel)

Intel today is revealing some of the final details of its Light Peak technology as it makes its way into the first wave of consumer and business gadgetry.

Now officially known as Thunderbolt, the data transfer and high-definition PC connection runs at 10 gigabits per second and "can transfer a full-length HD movie in less than 30 seconds," Intel announced this morning.
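As a rough sanity check on that claim, here is a minimal sketch of how much data a 10 Gbps link could move in 30 seconds. It assumes the full line rate is available as payload (no protocol overhead) and uses decimal units. The function and constant names are illustrative and do not come from Intel.

```python
def max_transfer_gigabytes(link_gbps: float, seconds: float) -> float:
    """Upper bound on data moved, in decimal gigabytes, ignoring protocol overhead."""
    gigabits = link_gbps * seconds
    return gigabits / 8  # 8 bits per byte


THUNDERBOLT_GBPS = 10  # per-direction rate quoted in Intel's announcement

print(max_transfer_gigabytes(THUNDERBOLT_GBPS, 30))  # 37.5
```

Under these assumptions the ceiling is about 37.5 GB in 30 seconds, so a movie file smaller than that fits the claim in principle, provided the storage at both ends can keep up.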

PCI Express is one of the protocols that Thunderbolt carries.

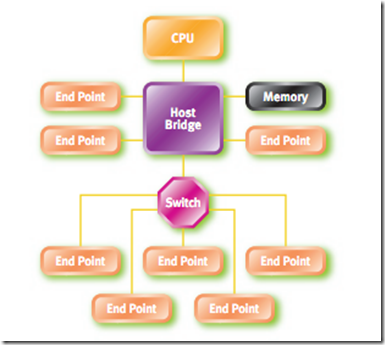

Here is a white paper on PCI Express. Part of the idea behind PCI Express is adding a switch to the I/O design.

Given Apple's and Intel's announcement, PCI Express interconnects should become cheaper. See this article in HPC for how a PCI Express interconnect could be used in high-performance clusters.

January 24, 2011

A Case for PCI Express as a High-Performance Cluster Interconnect

Vijay Meduri, PLX Technology

In high-speed computing (HPC), there are a number of significant benefits to simplifying the processor interconnect in rack- and chassis-based servers by designing in PCI Express (PCIe). The PCI-SIG, the group responsible for the conventional PCI and the much-higher-performance PCIe standards, has released three generations of PCIe specifications over the last eight years and is fully expected to continue this progression in the future with even newer generations, from which HPC systems will continue to see newer features, faster data throughput and improved reliability.

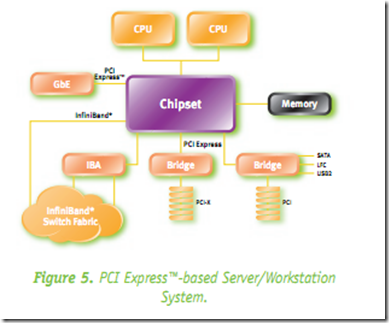

The latest PCIe specification, Gen 3, runs at 8Gbps per serial lane, enabling a 48-lane switch to handle a whopping 96 GBytes/sec. of full duplex peer to peer traffic. Due to the widespread usage of PCI and PCIe in computing, communications and industrial applications, this interconnect technology's ecosystem is widely deployed and its cost efficiencies as a fabric are enormous. The PCIe interconnect, in each of its generations, offers a clean, high-performance interconnect with low-latency and substantial savings in terms of cost and power. The savings are due to its ability to eliminate multiple layers of expensive switches and bridges that previously were needed to blend various standards. This article explains the key features of a PCIe fabric that now make clusters, expansion boxes and shared-I/O applications relatively easy to develop and deploy.
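The 96 GBytes/sec figure can be reproduced with a few lines of arithmetic. The sketch below multiplies the lane count by the per-lane rate and doubles it for full duplex. The function name and the `encoding_efficiency` parameter are illustrative, not taken from the article. The optional 128b/130b factor reflects the line encoding PCIe Gen 3 uses, which the article's round number appears to ignore.

```python
def pcie_switch_bandwidth_gbytes(lanes, gbps_per_lane=8.0, encoding_efficiency=1.0):
    """Return (per-direction, full-duplex) bandwidth in GBytes/sec.

    encoding_efficiency=1.0 treats the raw lane rate as payload;
    pass 128/130 to account for Gen 3 line encoding.
    """
    per_direction = lanes * gbps_per_lane * encoding_efficiency / 8  # bits to bytes
    return per_direction, 2 * per_direction


# Raw rate, as quoted in the article: 48 lanes at 8 Gbps each.
one_way, full_duplex = pcie_switch_bandwidth_gbytes(48)
print(one_way, full_duplex)  # 48.0 96.0

# Slightly lower once 128b/130b encoding overhead is included.
print(pcie_switch_bandwidth_gbytes(48, encoding_efficiency=128 / 130))
```

With encoding overhead included the result falls a little below the quoted figure, so the 96 GBytes/sec number is best read as a raw aggregate rather than deliverable payload.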

Here is the idea of using PCI Express for cloud infrastructure.

Figure 1 illustrates a typical topology of building out a server cluster today, in which, while the form factors may change, the basic configuration follows a similar pattern. Given the widespread availability of open-source software and off-the-shelf hardware, companies have successfully built large topologies for their internal cloud infrastructure using this architecture.

Figure 1: Typical Data Center I/O interconnect

Figure 2 illustrates a server cluster built using a native PCIe fabric. As is evident, the usage of numerous adapters and controllers is significantly reduced and this results in a tremendous reduction in power and cost of the overall platform, while delivering better performance in terms of lower latency and higher throughput.

Figure 2: PCI Express-based Server Cluster