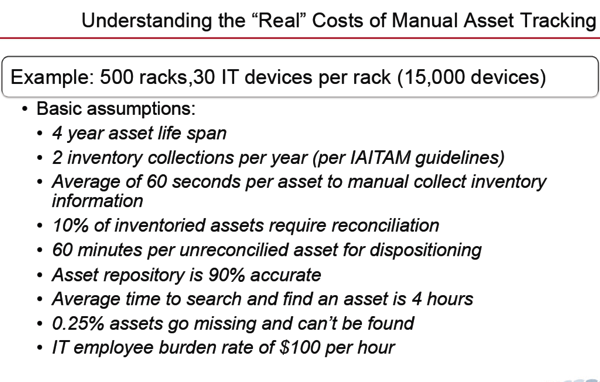

Years ago I went to the IAITAM conference with friends from three of the big five data center companies (GAFAM) who all work on asset management. IAITAM is the International Association of IT Asset Managers. At the conference I realized that most of the presentations and users were focused on how to count assets, record them, and report on a regular schedule to align with financial systems. This isn't quite what I think of as asset management, but it is a critical part.

A couple of weeks ago I watched two great presentations: Cheng Lou on "The Spectrum of Abstraction" and Barbara Liskov on "The Power of Abstraction".

So what happens if you apply the ideas that Cheng and Barbara shared to asset management? How do you apply abstraction to asset management? Start with abstraction in software engineering: https://en.wikipedia.org/wiki/Abstraction_(software_engineering)

Break down the asset management problem. One part is counting things. You could count the number of HP DL380s in an area, but since you are counting DL380s for a depreciation schedule, you need to identify individual DL380s, not just the total number, so you need a unique ID system. Most would apply an asset tag, maybe a bar code. Some think they are being more advanced by using RFID tags. The flaw with this method is that if the asset tags are applied incorrectly, fall off, or a data entry error is made when the original record is created, the error can be extremely hard to catch and you are counting incorrectly for the life of the asset. So let's abstract the asset identification problem into a virtual asset ID that can be automated and is near perfect in its identification method. Oh, and make it so there is a REST API to identify an asset programmatically.
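To make the virtual asset ID idea concrete, here is a minimal sketch. It assumes the ID is derived from facts the machine reports about itself (vendor, model, serial number), which is my assumption for illustration and only one possible approach. `HardwareFacts`, `virtual_asset_id`, `AssetRegistry`, and the `assets.example.com` namespace are all hypothetical names, and the registry's `get` method is only a stand-in for what a REST lookup endpoint might return.

```python
import uuid
from dataclasses import dataclass
from typing import Dict, Optional

# Hypothetical namespace for this sketch; any fixed UUID would do.
ASSET_NAMESPACE = uuid.uuid5(uuid.NAMESPACE_DNS, "assets.example.com")


@dataclass(frozen=True)
class HardwareFacts:
    """Facts a server reports about itself (assumed inputs, not a real API)."""
    vendor: str
    model: str
    serial: str


def virtual_asset_id(facts: HardwareFacts) -> str:
    """Derive a deterministic ID, so no human has to type or stick on a tag."""
    # Normalize so that whitespace or case differences do not create new IDs.
    key = "|".join(
        part.strip().upper() for part in (facts.vendor, facts.model, facts.serial)
    )
    return str(uuid.uuid5(ASSET_NAMESPACE, key))


class AssetRegistry:
    """In-memory stand-in for the service behind a lookup API."""

    def __init__(self) -> None:
        self._assets: Dict[str, HardwareFacts] = {}

    def register(self, facts: HardwareFacts) -> str:
        asset_id = virtual_asset_id(facts)
        self._assets[asset_id] = facts  # registering twice is harmless
        return asset_id

    def get(self, asset_id: str) -> Optional[HardwareFacts]:
        return self._assets.get(asset_id)


registry = AssetRegistry()
asset_id = registry.register(HardwareFacts("HP", "DL380", "abc123"))
print(asset_id, registry.get(asset_id))
```

Because the ID is computed rather than assigned, the same machine always maps to the same ID, which is the property a hand-applied tag cannot guarantee.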

If you can do all of the above, then change the way assets are counted so that the count is run by a microservice. Finding a uniquely identified DL380 on a network means it is in a given space. If you know the network, then you know the location. If you have a perfect accounting of network cables, then you can determine location based on cables and network ports.
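The counting step could look something like the sketch below. It assumes you have a cable map from (switch, port) to rack location and a list of sightings saying which asset ID was seen on which port. The `Sighting` type, the `locate` and `count_by_rack` functions, and the switch and rack names are all hypothetical, made up for this illustration.

```python
from collections import Counter
from typing import Dict, List, NamedTuple, Tuple


class Sighting(NamedTuple):
    """One observation of an asset on a switch port (assumed data shape)."""
    asset_id: str
    model: str
    switch: str
    port: int


CableMap = Dict[Tuple[str, int], str]  # (switch, port) -> rack location


def locate(sightings: List[Sighting], cable_map: CableMap):
    """Split assets into those with a known rack and those we cannot place."""
    located: Dict[str, str] = {}
    unknown: List[str] = []
    for s in sightings:
        rack = cable_map.get((s.switch, s.port))
        if rack is not None:
            located[s.asset_id] = rack
        elif s.asset_id not in unknown:
            unknown.append(s.asset_id)
    return located, unknown


def count_by_rack(sightings: List[Sighting], cable_map: CableMap) -> Counter:
    """Count each asset once per (rack, model), even if it is seen repeatedly."""
    placed: Dict[str, Tuple[str, str]] = {}
    for s in sightings:
        rack = cable_map.get((s.switch, s.port))
        if rack is not None:
            placed[s.asset_id] = (rack, s.model)
    return Counter(placed.values())


cable_map = {("sw-a1", 1): "rack-01", ("sw-a1", 2): "rack-01", ("sw-b1", 1): "rack-02"}
sightings = [
    Sighting("id-1", "DL380", "sw-a1", 1),
    Sighting("id-2", "DL380", "sw-a1", 2),
    Sighting("id-3", "DL380", "sw-b1", 1),
    Sighting("id-4", "DL380", "sw-z9", 7),  # port missing from the cable map
]
print(count_by_rack(sightings, cable_map))
print(locate(sightings, cable_map)[1])
```

The list of assets that cannot be placed is as useful as the count itself: it tells you where the cable records are incomplete.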

Now you may say this is way too hard for your legacy environment, which is why people get stuck with asset tags and having people walk down the aisles reading bar codes. But the Art of Abstraction can be applied to parts of the problem. If you started on Jan 1, 2017 with all new assets, then at least those could be counted with an abstraction approach. Wouldn't you like to know there are areas of the data center where you have automated asset management? I would.

This is my plan to change asset management with abstraction. I left out some details because this post would get way too long, but you get the idea.