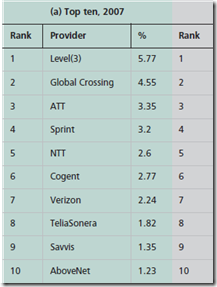

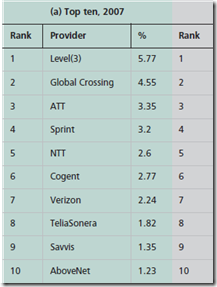

The telco landscape is changing, and fast. In 2007, Internet traffic looked like this.

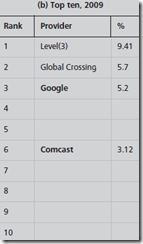

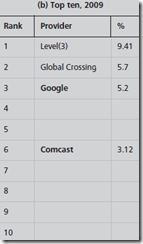

A Google paper said, in effect: hey, look at 2009.

In 2011, Facebook and Google are both in the top 10 and growing faster than the rest. Light Reading has an article on this trend.

OSA 2011: Optical World Faces Hipster Challenge

MARCH 8, 2011 | Craig Matsumoto

LOS ANGELES -- OSA Executive Forum 2011 -- The needs of content providers such as Facebook and Google (Nasdaq: GOOG) are starting to influence roadmaps in optical components and systems, and that's accentuating the differences between telcos and new network owners.

Google makes the case that the traditional telecom cycle is too slow, which reminds me of the old way of product design.

Google's case was particularly spotlighted, partly because the company landed two panel spots (both filled by Senior Network Architect Bikash Koley, due to a colleague's illness). Koley made it clear that he thinks the traditional telecom cycles are too slow for Google and don't produce the right kinds of products anyway.

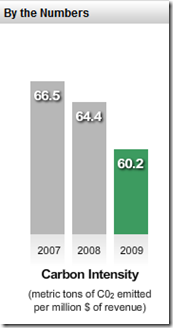

Google makes the point that power consumption is too high. How many network guys/gals do you know who discuss the power draw of the network? Networks have a big role in a green data center.

That's because standards too often aren't developed with the input of the ultimate buyers, he said. By the time products arrive, they're too big and/or power-hungry to suit the next-generation needs that the user is building for. Koley stepped through an example of how it all goes wrong, drawn not from Google but from his experience at a large equipment vendor. (He didn't specify which one, but his resume includes time at Ciena Corp. (Nasdaq: CIEN))

The users (Google and Facebook) are frustrated.

The problem stems from each vendor taking its cues from a neighboring step in the supply chain, rather than going to the source. The result, in Koley's example: A product that arrived years late. "Not talking to a user gives you the wrong time horizon," Koley said.

And they are doing something about it.

One alternative is to bypass the standards bodies and develop a multisource agreement (MSA), a tactic that's worked for transceiver modules.

"Any time there's a void and there's enough need, especially if there's enough bandwidth that needs to be deployed, there's a vehicle" thanks to MSAs, said Donn Lee, a senior network engineer at Facebook. (Lee appeared on a panel separate from either of Koley's.)

Need evidence of how this might work? Koley pointed to the recently ratified 10x10 multisource agreement (MSA), which was created with input from a spectrum of companies including not just module suppliers, but also cable operators, telcos, Ethernet service providers and Google.

Here is the 10x10 MSA referred to above.

The 10x10 solution is designed to meet the needs of users whose links run longer than 100 meters but shorter than 2 km. Many data centers have link requirements beyond 100 meters, but don’t need to go much more than a few hundred meters. The 10 km solution is overkill for these applications because the 10x10 solution can meet the link requirements at less than half the cost of 100GBASE-LR4 and about 70% of the power (14W for 10x10 vs 20W for 100GBASE-LR4). Since the 10x10 is CFP compatible and can fit in the same port as 100GBASE-LR4, customers will see the benefits of the 10x10 over 100GBASE-LR4 for link distances over 100 meters but under 2 km.
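To make the power claim concrete, here is a rough sketch of the arithmetic behind the "about 70% of the power" figure. The 14W and 20W numbers and the 100 m / 2 km distances come from the MSA text above; the function names, the always-on assumption, and the exact handling of the distance boundaries are my own illustration, not anything the MSA defines. It also ignores cooling overhead, so the real savings in a data center would likely be somewhat larger.

```python
# Power figures quoted in the 10x10 MSA text (watts per module).
POWER_10X10_W = 14.0
POWER_LR4_W = 20.0

# Assumption: modules run around the clock, non-leap year.
HOURS_PER_YEAR = 24 * 365


def power_ratio(low_w=POWER_10X10_W, high_w=POWER_LR4_W):
    """Fraction of the higher-power module's draw used by the lower one."""
    return low_w / high_w


def annual_kwh_saved(ports, low_w=POWER_10X10_W, high_w=POWER_LR4_W):
    """Energy saved per year, in kWh, across `ports` always-on modules."""
    return ports * (high_w - low_w) * HOURS_PER_YEAR / 1000.0


def pick_optic(link_m):
    """Hypothetical selector based on the distance ranges in the MSA text.

    Boundary handling (exactly 100 m or exactly 2 km) is an assumption.
    """
    if link_m <= 100:
        return "short-reach optic (outside this comparison)"
    if link_m < 2000:
        return "10x10"
    return "100GBASE-LR4"


if __name__ == "__main__":
    print(f"10x10 draws {power_ratio():.0%} of the LR4 power")
    print(f"Saved per port per year: {annual_kwh_saved(1):.2f} kWh")
    print(f"300 m link -> {pick_optic(300)}")
```

The 6W difference per port works out to about 52.56 kWh per port per year, which looks small until you multiply it by the number of 100G ports in a large data center.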

Responding to the call by end-users and equipment manufacturers, the 10X10 MSA is established to deliver the industry’s lowest cost 100GbE solution over single-mode fiber.

The 10X10 MSA is defining a new price and performance trajectory for 100GbE that will significantly accelerate the adoption and economics of 100G deployments.

The 10X10 MSA group is backed by a robust ecosystem of end-users, system manufacturers and optical module suppliers including Google, Brocade, JDSU and Santur.

Member companies include Google, Brocade, MRV, Enablence, Cyoptics, JDSU, AFOP, Santur, Oplink, Hitachi Cable America, AMS-IX, EXFO, Huawei, Kotura, Facebook, Effdon, Cortina Systems and BTI Systems…