Google’s Security and Data Protection in a Google Data Center video has gone viral with 152,000 views. When I checked yesterday it was at 110,000. YouTube lists the posting date as Apr 13, 2011, but the comments only started 3 days ago, so most likely the video was uploaded on April 13 and not released publicly until Apr 23. Google could not have asked for better timing, coming right after the AWS outage.

What I found interesting was one article saying the video is related to Facebook’s Open Compute Project.

The media has juxtaposed the data center video with Facebook's Open Compute project, in which the company open sourced its data center hardware and schematics earlier this month.

Facebook's move was an open-source olive branch to the computing community at large, but it was also a calculated play to urge the creation of less expensive, commodity servers.

Google's video tour is an educational play designed to assure enterprises and federal agencies considering a Google Apps collaboration software contract of its stringent data security.

I know the Google guys have been thinking about this video for over 6 months as a way to promote the security of data in Google data centers. Has Facebook been thinking about the Open Compute Project for over 6 months?

The Register highlights the hard drive security:

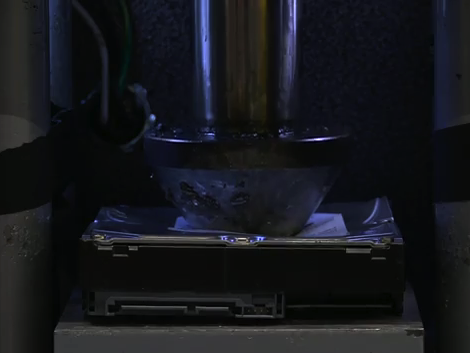

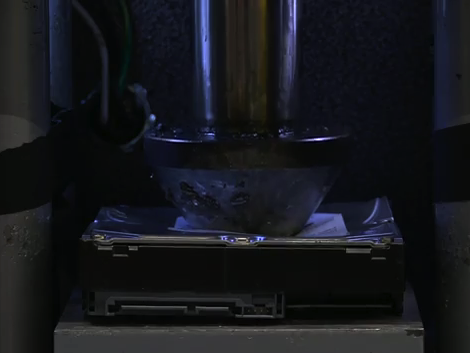

Nonetheless, when a hard drive fails or no longer exhibits prime performance and must be disposed of, Google uses multiple techniques to ensure that the data can't be read at all. It overwrites the data, and then it uses a complete disk read to verify that all data has been removed. When disk reaches the end of its life, Google will then destroy it. This involves pushing a steel piston through the center of the drive and then shredding it into relatively small pieces. The remains of the drives are then sent to recycling centers.

The Crusher: Google gives hard drives the piston treatment
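For the curious, the overwrite-then-verify step described in the quote can be sketched in a few lines of Python. This is only an illustration of the idea, not Google's tooling: it works on an ordinary file rather than a raw disk, it uses a single pass of zeros (the article does not say what pattern Google writes), and the function names `overwrite_with_zeros` and `verify_zeroed` are made up for this example. Keep in mind that on SSDs and journaling filesystems an overwrite like this does not guarantee the old bytes are physically gone, which is presumably part of why the piston and shredder come next.

```python
import os
import tempfile

CHUNK = 1024 * 1024  # work in 1 MiB pieces so large files do not fill memory


def overwrite_with_zeros(path, chunk_size=CHUNK):
    """Overwrite every byte of the file at `path` with zeros; return its size."""
    size = os.path.getsize(path)
    with open(path, "r+b") as f:
        remaining = size
        while remaining > 0:
            n = min(chunk_size, remaining)
            f.write(b"\x00" * n)
            remaining -= n
        f.flush()
        os.fsync(f.fileno())  # ask the OS to push the writes to the device
    return size


def verify_zeroed(path, chunk_size=CHUNK):
    """Read the whole file back and return True only if every byte is zero."""
    with open(path, "rb") as f:
        while True:
            block = f.read(chunk_size)
            if not block:
                return True
            if any(block):  # any non-zero byte means old data survived
                return False


if __name__ == "__main__":
    # Hypothetical stand-in for a drive: a temporary file with some data in it.
    fd, demo_path = tempfile.mkstemp()
    os.write(fd, b"customer data " * 1000)
    os.close(fd)

    overwrite_with_zeros(demo_path)
    print("verified clean:", verify_zeroed(demo_path))
    os.remove(demo_path)
```

The point of the second function is the same as the "complete disk read" in the quote: the wipe is not trusted until everything has been read back and checked.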

What doesn’t get mentioned, and what I think is cooler than the low-tech ways of handling hard drive security, is Google’s sharding methods to protect data and achieve scalability, but this is way too geeky for most users to be interested in.

We can hope that, with the popularity of the video and the news coverage, Google and others will create more data center videos.

Could you imagine a documentary-style video of the AWS outage? Would the video be a comedy or a drama? A video of Sony’s PlayStation outage would be a tragedy, or a comedy if you are from the Xbox Live team.