Google posted a video on its Hamina Data Center.

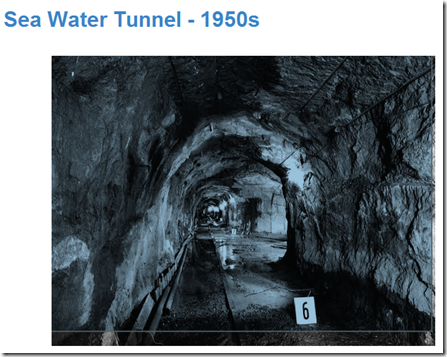

Joe Kava is featured in the video, but the video I would really have liked to see is Joe scuba diving through the sea water tunnel that brings water into the facility. Below is a picture of the tunnel, built in 1950.

If you want to see what the facility looked like before, check out this tour Google gave to the press.

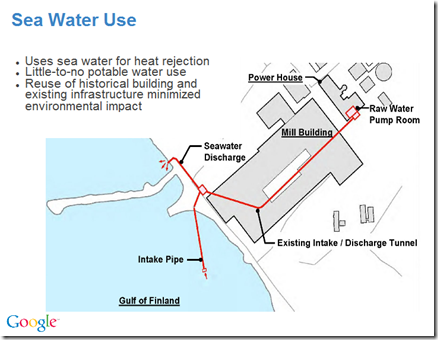

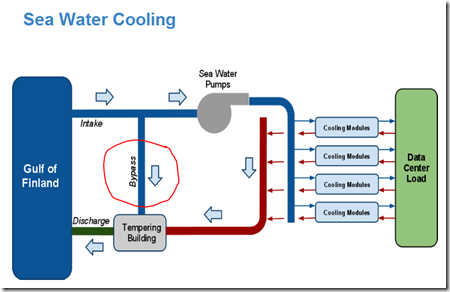

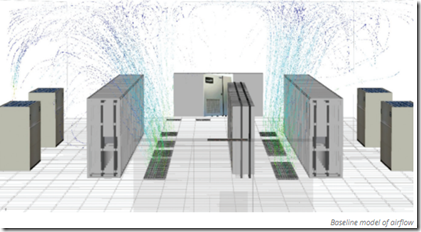

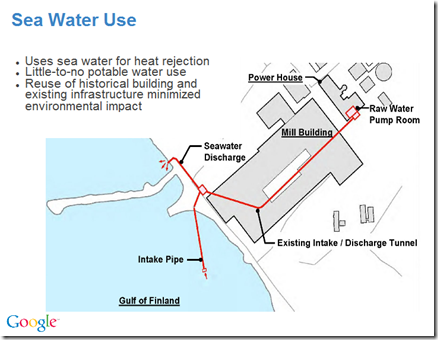

Using 100% sea water for cooling is a first for the data center industry, and Hamina has some unique characteristics.

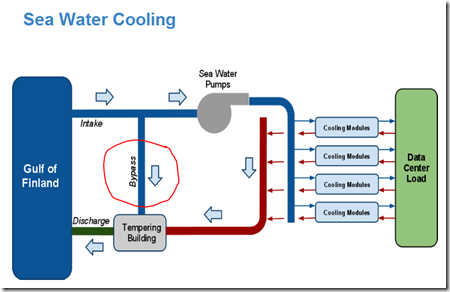

Google added a bypass mixing function to the sea water cooling system to lower the temperature of the water discharged back to the gulf. No government agency required this, but Google recognized that the change would reduce the environmental impact, which fits its sustainability strategy.
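To get a feel for why bypass mixing lowers the discharge temperature, here is a rough sketch of the arithmetic. It assumes both streams are sea water with the same density and specific heat, so the blended temperature is just a flow-weighted average. The function name and all the numbers are made up for illustration; they are not Google's figures or design.

```python
def mixed_discharge_temp(warm_flow, warm_temp, bypass_flow, bypass_temp):
    """Flow-weighted average temperature of two blended sea water streams.

    Assumes equal density and specific heat for both streams (hypothetical
    simplification). Flows can be in any unit as long as both use the same one.
    """
    total_flow = warm_flow + bypass_flow
    if total_flow <= 0:
        raise ValueError("total flow must be positive")
    return (warm_flow * warm_temp + bypass_flow * bypass_temp) / total_flow


# Illustrative values only: equal parts warm return water and cold bypass water.
example_temp = mixed_discharge_temp(
    warm_flow=1.0, warm_temp=20.0,      # water leaving the heat exchangers
    bypass_flow=1.0, bypass_temp=10.0,  # fresh sea water routed around them
)
print(example_temp)
```

The more cold sea water is routed through the bypass, the closer the discharge gets to the intake temperature.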

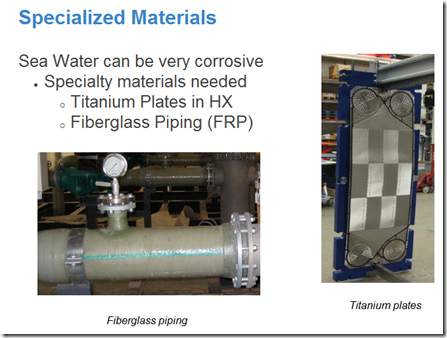

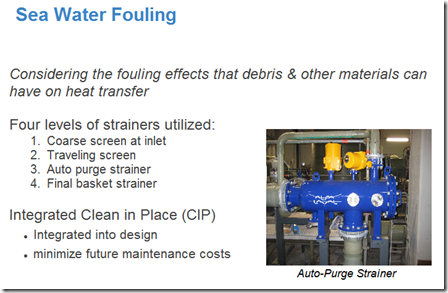

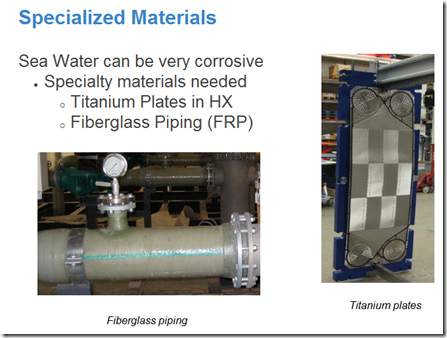

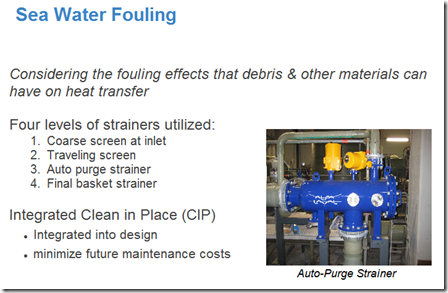

It took a lot of thought for Google to design a sea water cooling system that runs 24x7 for years with no downtime.

I asked Joe some questions about his presentation, and one of the areas we covered was maintenance of a sea water cooling system. Companies that design sea water cooling systems typically assume annual maintenance that includes downtime. You can imagine the Google guys telling the engineering company that there will be no downtime in this facility.

Below is where Google lists an integrated Clean in Place (CIP) system and other features to address sea water fouling to eliminate maintenance downtime.

37Signals just posted on Ten Lessons for great landscape architecture. You may think landscape architecture doesn’t have anything to do with data center design, but great architecture design is consistent across many areas.

Frederick Law Olmsted (1822-1903), the father of American landscape architecture, may have more to do with the way America looks than anyone else. Beginning in 1857 with the design of Central Park in New York City, he created designs for thousands of landscapes, including many of the world’s most important parks.

His works include Prospect Park in Brooklyn, Boston’s Emerald Necklace, Biltmore Estate in North Carolina, Mount Royal in Montreal, the grounds of the U.S. Capitol and the White House, and Washington Park, Jackson Park and the World’s Columbian Exposition of 1893 in Chicago. (The last of those documented excellently in Erik Larson’s book The Devil in the White City.) Plus, many of the green spaces that define towns and cities across the country are influenced by Olmsted.

Consider Lesson #1 of a great architect.

1) Respect “the genius of a place.”

Olmsted wanted his designs to stay true to the character of their natural surroundings. He referred to “the genius of a place,” a belief that every site has ecologically and spiritually unique qualities. The goal was to “access this genius” and let it infuse all design decisions.

This meant taking advantage of unique characteristics of a site while also acknowledging disadvantages. For example, he was willing to abandon the rainfall-requiring scenery he loved most for landscapes more appropriate to climates he worked in. That meant a separate landscape style for the South while in the dryer, western parts of the country he used a water-conserving style (seen most visibly on the campus of Stanford University, design shown at right).

Think about these words and watch the video again. Right at the beginning Joe talks about designing for the unique characteristics of a site.

You may think this idea is a waste of time and that you don’t have the money, but 30 years or 100 years from now, great data center designs, designs that match their site, will last and be upgraded. Data centers that are not designed for “the genius of a place” will fade and be demolished.
