There is a lot of news coverage of Facebook publishing its results on Tilera-based servers.

Facebook study shows Tilera processors are four times more energy efficient

Facebook sides with Tilera in the server architecture debate

Facebook: Tilera chips more energy efficient than x86

What I found most useful is the PDF of the paper that Facebook published.

Many-Core Key-Value Store

Mateusz Berezecki, mateuszb@fb.com

Eitan Frachtenberg, etc@fb.com

Mike Paleczny, mpal@fb.com

Kenneth Steele, Tilera, ken@tilera.com

We show that the throughput, response time, and power consumption of a high-core-count processor operating at a low clock rate and very low power consumption can perform well when compared to a platform using faster but fewer commodity cores. Specific measurements are made for a key-value store, Memcached, using a variety of systems based on three different processors: the 4-core Intel Xeon L5520, 8-core AMD Opteron 6128 HE, and 64-core Tilera TILEPro64.

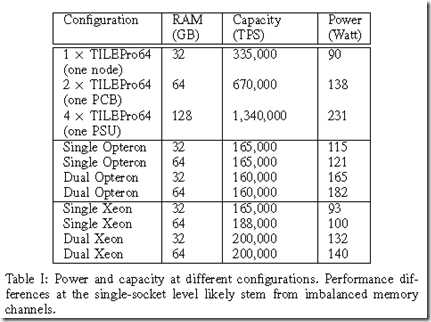

Here is the comparison of the Tilera, AMD, and Intel systems.

Here is a good tip, and a reason to think twice about putting more than 64 GB of RAM in a single server for Memcached services.

As a comparison basis, we could populate the x86-based servers with many more DIMMs (up to a theoretical 384GB in the Opteron’s case, or twice that if using 16GB DIMMs). But there are two operational limitations that render this choice impractical. First, the throughput requirement of the server grows with the amount of data and can easily exceed the processor or network interface capacity in a single commodity server. Second, placing this much data in a single server is risky: all servers fail eventually, and rebuilding the KV store for so much data, key by key, is prohibitively slow. So in practice, we rarely place much more than 64GB of table data in a single failure domain. (In the S2Q case, CPUs, RAM, BMC, and NICs are independent at the 32GB level; motherboards are independent and hot-swappable at the 64GB level; and only the PSU is shared among 128GB worth of data.)
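The sizing argument in that passage can be turned into a quick back-of-the-envelope calculation. The snippet below is only a minimal sketch and is not taken from the paper: the helper names `failure_domains_needed` and `fraction_lost_on_failure` are hypothetical, and the only figures borrowed from the quoted text are the 64 GB cap and the 384 GB theoretical Opteron configuration.

```python
import math

# Cap taken from the quoted passage: rarely much more than 64 GB of
# table data in a single failure domain.
MAX_GB_PER_FAILURE_DOMAIN = 64


def failure_domains_needed(total_table_gb, cap_gb=MAX_GB_PER_FAILURE_DOMAIN):
    """Hypothetical helper: number of failure domains needed so that no
    single domain holds more than cap_gb of table data."""
    if total_table_gb < 0 or cap_gb <= 0:
        raise ValueError("total must be non-negative and cap must be positive")
    return math.ceil(total_table_gb / cap_gb)


def fraction_lost_on_failure(total_table_gb, cap_gb=MAX_GB_PER_FAILURE_DOMAIN):
    """Hypothetical helper: share of the table data that has to be rebuilt
    when one completely full failure domain goes down."""
    if total_table_gb <= 0 or cap_gb <= 0:
        raise ValueError("sizes must be positive")
    return min(cap_gb, total_table_gb) / total_table_gb


# The 384 GB theoretical Opteron configuration mentioned in the quote,
# split so that each failure domain stays at or below 64 GB.
domains = failure_domains_needed(384)
print(domains)
```

Keeping all 384 GB in one server would instead mean a single failure domain, so one failure forces the whole table to be rebuilt key by key.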

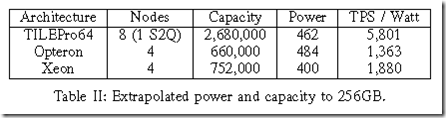

But if you do want to go beyond 64 GB, here are some numbers for a 256 GB RAM configuration.

And the conclusions.

Our experiments show that a tuned version of Memcached on the 64-core Tilera TILEPro64 can yield at least 67% higher throughput than low-power x86 servers at comparable latency. When taking power and node integration into account as well, a TILEPro64-based S2Q server with 8 processors handles at least three times as many transactions per second per Watt as the x86-based servers with the same memory footprint.
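The efficiency metric in that conclusion, transactions per second per Watt, is simple to compute once throughput and power draw are measured. The following is a minimal sketch with hypothetical function names; the input numbers are made-up placeholders chosen for easy arithmetic, not measurements from the paper.

```python
def tps_per_watt(transactions_per_second, watts):
    """Transactions per second delivered for each Watt of power drawn."""
    if watts <= 0:
        raise ValueError("power draw must be positive")
    return transactions_per_second / watts


def efficiency_ratio(a_tps, a_watts, b_tps, b_watts):
    """How many times more TPS/Watt system A delivers than system B."""
    return tps_per_watt(a_tps, a_watts) / tps_per_watt(b_tps, b_watts)


# Placeholder inputs (NOT figures from the paper): system A handles
# 800 TPS at 4 W, system B handles 500 TPS at 5 W.
ratio = efficiency_ratio(800, 4, 500, 5)
print(ratio)
```

Note that the metric rewards either higher throughput or lower power, so a slower-clocked chip can still come out ahead if its power draw is low enough.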

The paper also discusses the secret behind the server's performance: on-chip networking.

The main reasons for this performance are the elimination

or parallelization of serializing bottlenecks using the on-chip

network; and the allocation of different cores to different

functions such as kernel networking stack and application

modules. This technique can be very useful across architectures,

particularly as the number of cores increases. In

our study, the TILEPro64 exhibits near-linear throughput

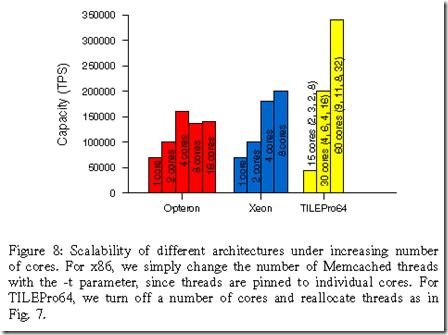

scaling with the number of cores, up to 48 UDP cores.
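The idea of dedicating different cores to different functions can be illustrated with a small planning helper. This is a sketch under stated assumptions rather than the paper's implementation: `allocate_cores` is a hypothetical function, and the role names and the 12/48/4 split are invented for illustration. Only the 64-core total and the figure of 48 UDP cores come from the quoted text.

```python
def allocate_cores(total_cores, plan):
    """Hypothetical helper: give each role in `plan` (role -> core count)
    its own contiguous, non-overlapping range of core IDs."""
    if any(count < 0 for count in plan.values()):
        raise ValueError("core counts must be non-negative")
    if sum(plan.values()) > total_cores:
        raise ValueError("plan requests more cores than are available")

    allocation = {}
    next_core = 0
    for role, count in plan.items():
        allocation[role] = list(range(next_core, next_core + count))
        next_core += count
    return allocation


# Assumed split for illustration only; the paper's actual layout may differ.
example_plan = {
    "kernel_network_stack": 12,  # assumption
    "udp_workers": 48,           # "up to 48 UDP cores" in the quoted text
    "other_modules": 4,          # assumption
}
allocation = allocate_cores(64, example_plan)
print(len(allocation["udp_workers"]))
```

On Linux, a list of core IDs like these could be passed to `os.sched_setaffinity` to pin a process to its assigned cores, so that each function runs on its own cores instead of competing with the others.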