The NYTimes has an article about Jonathan Koomey's research.

Data Centers’ Power Use Less Than Was Expected

By JOHN MARKOFF

Published: July 31, 2011

SAN FRANCISCO — Data centers’ unquenchable thirst for electricity has been slaked by the global recession and by a combination of new power-saving technologies, according to an independent report on data center power use from 2005 to 2010.

Here is Jonathan's blog post.

My new study of data center electricity use in 2010

I just released my new study on data center electricity use in 2010. I did the research as an exclusive for the New York Times, and John Markoff at the Times wrote an article on it that will appear in the print paper August 1, 2011. You can download the new report here.

I research, consult, and lecture about climate solutions, critical thinking skills, and the environmental effects of information technology.

What three reasons does Jonathan give for data center energy use growing more slowly than the 2007 EPA study he worked on projected?

- the 2008-09 economic crisis

- the increased prevalence of virtualization in data centers

- the industry’s efforts to improve efficiency of these facilities since 2005

My assumption is that Jonathan, as a numbers guy, would list these in order of significance. If so, had the economy not hit a rough spot in 2008-09, energy growth would have been much higher. The economy was a bigger factor than virtualization (aka the cloud), and virtualization cut more energy than PUE (power usage effectiveness) improvements. Although, as the NYTimes says, it is difficult to separate these effects in the numbers, sometimes you need to trust your gut on what feels right, and I agree with these assumptions.

Though Mr. Koomey was unable to separate the impact of the recession from that of energy-saving technologies, the decline in use is surprising because data centers, buildings that house racks and racks of computers, have become so central to modern life. They are used to process e-mail, conduct Web searches and handle online shopping as well as banking transactions and corporate sales reports.

But let's look at who doubled, tripled, or quadrupled their data centers from 2005 to 2010.

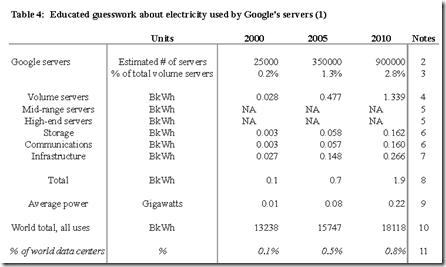

Google nearly tripled its server count from 2005 to 2010, going from 350k to 900k servers (about 2.6 times as many), with energy use rising from 0.7 to 1.9 billion kWh (BkWh).

Facebook launched in February 2004 and now has 100,000+ servers. In 2005 Facebook may have had around 100 servers, which would mean a 1,000-fold increase.

Zynga, started in January 2007, probably has 50,000+ servers if you count the ones in AWS. Infinite growth vs. 2005.

Amazon Web Services, launched in July 2006, probably has close to 100,000 servers. Infinite growth vs. 2005.

Jonathan worked on the 2007 EPA study, and he released this v2.0 update in August 2011. I think someone needs to fund his research so he can publish at least every other year. Infinite vs. 2005.
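To put these numbers side by side with the 56% overall growth discussed below, here is a small sketch that turns the figures quoted in this post into growth multiples. The names (`GROWTH_2005_TO_2010`, `growth_multiple`, `compare_to_aggregate`) are hypothetical, treating a zero 2005 baseline as infinite growth is my own convention, and the Facebook, Zynga and AWS counts are the rough estimates given above, not measured data.

```python
import math

# Overall 2005 -> 2010 growth of 56%, expressed as a multiple.
AGGREGATE_GROWTH = 1.56

# (2005 value, 2010 value) as quoted in this post. Facebook's 2005 count is a
# guess; Zynga and AWS did not exist in 2005, so their baseline is zero.
GROWTH_2005_TO_2010 = {
    "Google (servers)": (350_000, 900_000),
    "Google (energy, BkWh)": (0.7, 1.9),
    "Facebook (servers)": (100, 100_000),
    "Zynga (servers)": (0, 50_000),
    "AWS (servers)": (0, 100_000),
}


def growth_multiple(start: float, end: float) -> float:
    """Ratio end/start; a zero baseline is treated as infinite growth."""
    if start == 0:
        return math.inf
    return end / start


def compare_to_aggregate(data=GROWTH_2005_TO_2010, aggregate=AGGREGATE_GROWTH):
    """Map each entry to (growth multiple, multiple relative to the aggregate)."""
    result = {}
    for name, (start, end) in data.items():
        multiple = growth_multiple(start, end)
        result[name] = (multiple, multiple / aggregate)
    return result


if __name__ == "__main__":
    for name, (multiple, vs_avg) in compare_to_aggregate().items():
        print(f"{name:24s} {multiple:10.2f}x  ({vs_avg:.1f}x the 56% aggregate)")
```

Even Google, the most modest grower in this list, comes in well above the 1.56 multiple.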

So even though the average only grew 56%, what is much more interesting to me is the players who were far above it. Too often people focus on the average because they don't think about the range of the numbers. Dr. Sam Savage calls this the Flaw of Averages.

Here is how Savage illustrates the error of taking a single-number view instead of looking at the range.

The Flaw of Averages

A common cause of bad planning is an error Dr. Savage calls the Flaw of Averages, which may be stated as follows: plans based on average assumptions are wrong on average. As a sobering example, consider the state of a drunk, wandering around on a busy highway. His average position is the centerline, so...
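Savage's point is that when an outcome depends nonlinearly on an uncertain input, plugging in the average input does not give you the average outcome. Here is a minimal sketch in data center terms, using invented numbers: capacity is sized exactly to average demand, and we compare the shortfall that plan predicts with the shortfall you actually get on average. The demand range, the capacity, and the function names are all hypothetical illustrations, not figures from Koomey's study or Savage's work.

```python
import random
import statistics

# Hypothetical numbers for illustration only.
AVERAGE_DEMAND = 100.0      # average load, in servers' worth of work
CAPACITY = AVERAGE_DEMAND   # capacity planned from the average assumption


def shortfall(demand: float, capacity: float = CAPACITY) -> float:
    """Load that cannot be served; zero whenever capacity covers demand."""
    return max(0.0, demand - capacity)


def flaw_of_averages(n: int = 100_000, seed: int = 1) -> tuple[float, float]:
    """Return (shortfall at average demand, average shortfall over simulated demand)."""
    rng = random.Random(seed)
    # Demand varies uniformly between 50 and 150, so its mean is 100.
    demands = [rng.uniform(50.0, 150.0) for _ in range(n)]
    planned = shortfall(AVERAGE_DEMAND)                       # the plan says: no shortfall
    actual = statistics.fmean(shortfall(d) for d in demands)  # about 12.5 in expectation
    return planned, actual


if __name__ == "__main__":
    planned, actual = flaw_of_averages()
    print(f"shortfall at average demand: {planned:.1f}")
    print(f"average shortfall:           {actual:.1f}")
```

The plan built on the average says there is never a shortfall, yet half the time demand exceeds capacity, so the average shortfall is positive (12.5 in expectation for this uniform range). The average of the outcome is not the outcome of the average.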