Saving energy in the data center takes more than a low PUE. Running on 100% renewable power while wasting energy is not good practice either. I've been meaning to post on what Google and Facebook have done in this area, and I have been staring at these open browser tabs for a while.

First, in June 2014 Google shared its method of turning a server's power consumption down as low as possible while still meeting its latency targets. The Register covered this method.

Google has worked out how to save as much as 20 percent of its data-center electricity bill by reaching deep into the guts of its infrastructure and fiddling with the feverish silicon brains of its chips.

In a paper to be presented next week at the ISCA 2014 computer architecture conference entitled "Towards Energy Proportionality for Large-Scale Latency-Critical Workloads", researchers from Google and Stanford University discuss an experimental system named "PEGASUS" that may save Google vast sums of money by helping it cut its electricity consumption.

The Google paper is here.

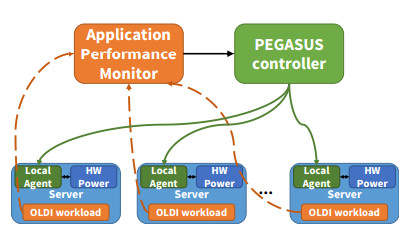

We presented PEGASUS, a feedback-based controller that implements iso-latency power management policy for large-scale, latency-critical workloads: it adjusts the power-performance settings of servers in a fine-grain manner so that the overall workload barely meets its latency constraints for user queries at any load. We demonstrated PEGASUS on a Google search cluster. We showed that it preserves SLO latency guarantees and can achieve significant power savings during periods of low or medium utilization (20% to 40% savings). We also established that overall workload latency is a better control signal for power management compared to CPU utilization. Overall, iso-latency provides a significant step forward towards the goal of energy proportionality for one of the challenging classes of large-scale, low-latency workloads.
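To make the iso-latency idea concrete, here is a minimal Python sketch of one control step. It is not PEGASUS itself: the latency bands, the step sizes, and the names `next_power_cap` and `PowerLimits` are my own assumptions. What it shares with the paper's description is the control signal, which is measured workload latency compared against the SLO rather than CPU utilization.

```python
from dataclasses import dataclass

@dataclass
class PowerLimits:
    min_watts: float  # lowest power cap the hardware accepts (hypothetical value)
    max_watts: float  # full, uncapped power

def next_power_cap(latency_ms: float, slo_ms: float, cap_watts: float, limits: PowerLimits) -> float:
    """One step of a hypothetical iso-latency controller: latency, not CPU utilization, drives the cap."""
    ratio = latency_ms / slo_ms
    if ratio > 1.0:
        new_cap = limits.max_watts   # SLO missed: restore full power at once
    elif ratio > 0.9:
        new_cap = cap_watts          # barely meeting the SLO: hold the current cap
    elif ratio > 0.6:
        new_cap = cap_watts * 0.98   # some slack: shave power gently
    else:
        new_cap = cap_watts * 0.95   # lots of slack: shave power faster
    # Never leave the range the hardware supports.
    return min(limits.max_watts, max(limits.min_watts, new_cap))

limits = PowerLimits(min_watts=50.0, max_watts=200.0)
cap = 200.0
for observed in [4.0, 4.5, 7.0, 9.5, 11.0]:  # made-up latencies (ms) against a 10 ms SLO
    cap = next_power_cap(observed, 10.0, cap, limits)
    print(f"latency={observed:4.1f} ms -> cap={cap:6.1f} W")
```

Run once per control interval, a loop like this walks the power cap down while there is latency slack and jumps straight back to full power on any SLO miss. That asymmetry is a deliberate choice in the sketch: giving back power slowly and reclaiming it instantly keeps user-visible latency violations short.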

In August 2014, Facebook shared Autoscale, its method of using load balancing to reduce energy consumption. Gigaom covered the idea.

The social networking giant found that when its web servers are idle and not taking user requests, they don’t need that much compute to function, thus they only require a relatively low amount of power. As the servers handle more networking traffic, they need to use more CPU resources, which means they also need to consume more energy.

Interestingly, Facebook found that during relatively quiet periods like midnight, while the servers consumed more energy than they would when left idle, the amount of wattage needed to keep them running was pretty close to what they need when processing a medium amount of traffic during busier hours. This means that it’s actually more efficient for Facebook to have its servers either inactive or running like they would during busier times; the servers just need to have network traffic streamed to them in such a way so that some can be left idle while the others are running at medium capacity.
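The arithmetic behind that observation is easy to check with a toy model. The power curve below is made up: hypothetical wattages at idle, low, medium, and full utilization, shaped the way Gigaom describes it, with low-load power close to medium-load power. It is not Facebook data. With a curve like this, serving the same total load from a smaller, busier set of servers while the rest idle draws less power than spreading the load thinly across every server.

```python
from bisect import bisect_right

# Hypothetical (CPU utilization, watts) points, NOT measured data. Shaped so that
# idle draws far less, but low load costs nearly as much as medium load.
POWER_CURVE = [(0.0, 100.0), (0.1, 180.0), (0.5, 200.0), (1.0, 250.0)]

def server_power(util: float) -> float:
    """Linearly interpolate a server's power draw (watts) at a utilization in [0, 1]."""
    if util <= POWER_CURVE[0][0]:
        return POWER_CURVE[0][1]
    if util >= POWER_CURVE[-1][0]:
        return POWER_CURVE[-1][1]
    # Find i such that POWER_CURVE[i-1] utilization <= util < POWER_CURVE[i] utilization.
    i = bisect_right([u for u, _ in POWER_CURVE], util)
    (u0, p0), (u1, p1) = POWER_CURVE[i - 1], POWER_CURVE[i]
    return p0 + (p1 - p0) * (util - u0) / (u1 - u0)

def cluster_power(total_load: float, servers: int, active: int) -> float:
    """Watts drawn when `total_load` (in fully-busy-server units) is split evenly over
    `active` servers and the remaining servers sit idle but stay powered on."""
    if not 1 <= active <= servers:
        raise ValueError("active must be between 1 and servers")
    util = total_load / active
    return active * server_power(util) + (servers - active) * server_power(0.0)

spread = cluster_power(10.0, servers=100, active=100)  # every server at 10%
packed = cluster_power(10.0, servers=100, active=20)   # 20 servers at 50%, 80 idle
print(f"spread: {spread:.0f} W, packed: {packed:.0f} W")
```

With these made-up numbers, spreading ten servers' worth of load across 100 machines draws 18,000 W, while packing it onto 20 machines at 50% and idling the other 80 draws 12,000 W. Real savings depend entirely on the actual shape of a server's power curve.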

Facebook's post on Autoscale is here.

Overall architecture

In each frontend cluster, Facebook uses custom load balancers to distribute workload to a pool of web servers. Following the implementation of Autoscale, the load balancer now uses an active, or “virtual,” pool of servers, which is essentially a subset of the physical server pool. Autoscale is designed to dynamically adjust the active pool size such that each active server will get at least medium-level CPU utilization regardless of the overall workload level. The servers that aren’t in the active pool don’t receive traffic.
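One way to picture the active ("virtual") pool is as a prefix of the physical pool that the load balancer cycles through. The sketch below assumes simple round-robin and a hypothetical `VirtualPoolBalancer` class; Facebook's custom load balancers are not described at this level of detail, so treat it only as an illustration of the rule that servers outside the active pool receive no traffic.

```python
from itertools import cycle

class VirtualPoolBalancer:
    """Hypothetical balancer: round-robin over an active subset (a prefix) of the physical pool."""

    def __init__(self, servers: list[str]) -> None:
        if not servers:
            raise ValueError("need at least one server")
        self.servers = list(servers)
        self.set_active_size(len(self.servers))

    def set_active_size(self, n: int) -> None:
        # Clamp to [1, physical pool size]; servers beyond the prefix get no traffic.
        self.active_size = max(1, min(n, len(self.servers)))
        self._next = cycle(self.servers[: self.active_size])

    def pick(self) -> str:
        # Return the next active server in round-robin order.
        return next(self._next)

lb = VirtualPoolBalancer([f"web{i}" for i in range(10)])
lb.set_active_size(4)  # e.g. a quiet period: only 4 of 10 servers take traffic
picks = [lb.pick() for _ in range(8)]
print(picks)
```

Keeping the active pool a prefix means resizing only moves one boundary. A production balancer would also need to drain in-flight requests from servers leaving the pool, which this sketch ignores.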

Figure 1: Overall structure of Autoscale

We formulate this as a feedback loop control problem, as shown in Figure 1. The control loop starts with collecting utilization information (CPU, request queue, etc.) from all active servers. Based on this data, the Autoscale controller makes a decision on the optimal active pool size and passes the decision to our load balancers. The load balancers then distribute the workload evenly among the active servers. It repeats this process for the next control cycle.
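The feedback loop can be sketched the same way. This assumes load is spread evenly, so average utilization times pool size estimates total demand, and it picks the smallest pool that keeps each active server at or below a target utilization. The 0.5 target, the function names `next_active_size` and `simulate`, and the one-step sizing rule are my assumptions, not Facebook's published controller.

```python
import math

def next_active_size(active: int, avg_util: float, target_util: float, physical: int) -> int:
    """Hypothetical sizing rule: smallest pool keeping per-server utilization <= target."""
    demand = active * avg_util  # estimated total load, in fully-busy-server units
    # Subtract a tiny epsilon so float noise like 20.000000000000004 doesn't round up to 21.
    wanted = math.ceil(demand / target_util - 1e-9)
    return max(1, min(physical, wanted))

def simulate(loads: list[float], physical: int = 100,
             target_util: float = 0.5) -> list[tuple[float, int, float]]:
    """Run one control cycle per entry in `loads`; record (load, active size, avg utilization)."""
    active = physical
    history = []
    for load in loads:
        # 1. The load balancer spreads the load evenly over the active pool.
        #    Idealized: utilization is not capped at 1.0, so overload shows up as > 1.
        avg_util = load / active
        history.append((load, active, avg_util))
        # 2. The controller reads utilization and picks the pool size for the next cycle.
        active = next_active_size(active, avg_util, target_util, physical)
    return history

for load, active, util in simulate([10.0, 10.0, 30.0]):
    print(f"load={load:5.1f}  active={active:3d}  util={util:.2f}")
```

In this run the pool shrinks from 100 to 20 servers once the controller sees the quiet-period load. When load triples, the third cycle still runs only 20 servers (a modeled utilization of 1.5) before the controller reacts. That one-cycle lag is why a real controller would likely keep some headroom below its target.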