I got a chance to talk to IBM's Justin Cook, Program Director, System Dynamics for Smarter Cities, about IBM's press release for the Smarter Cities Portland project.

IBM and City of Portland Collaborate to Build a Smarter City

Portland, Oregon, USA - 09 Aug 2011: To better understand the dynamic behavior of cities, the City of Portland and IBM (NYSE: IBM) have collaborated to develop an interactive model of the relationships that exist among the city's core systems, including the economy, housing, education, public safety, transportation, healthcare/wellness, government services and utilities. The resulting computer simulation allowed Portland's leaders to see how city systems work together, and in turn identify opportunities to become a smarter city. The model was built to support the development of metrics for the Portland Plan, the City's roadmap for the next 25 years.

I've got friends in Portland, so I appreciate the unique environment Portland has. Here is what IBM says about when and why Portland was chosen for the Smarter City project.

IBM approached the City of Portland in late 2009, attracted by the City's reputation for pioneering efforts in long-range urban planning. To kick off the project, in April of 2010 IBM facilitated sessions with over 75 Portland-area subject matter experts in a wide variety of fields to learn about system interconnection points in Portland. Later, with help from researchers at Portland State University and systems software company Forio Business Simulations, the City and IBM collected approximately 10 years of historical data from across the city to support the model. The year-long project resulted in a computer model of Portland as an interconnected system that provides planners at the Portland Bureau of Planning and Sustainability with an interactive visual model that allows them to navigate and test changes in the City's systems.

In talking to Justin, I asked him what tips he had for implementing this complex project. Here are the three tips he shared with me.

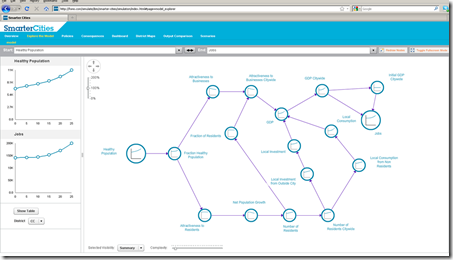

- Discuss the relationships among the groups involved to understand their perspectives and views. What you learn will help you understand the semantics of the information you need to build a model. There were 75 subject matter experts and multiple organizations involved in discussing initiatives for the Portland Plan. Below is a view of one dashboard showing various metrics that get you thinking beyond an individual department's view.

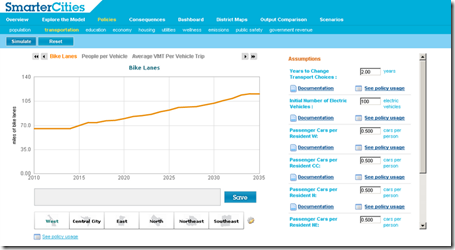

- Document assumptions openly so others know what inputs go into the models. Below is an example of bike lanes.

- Recognize the trade-off between transparency and complexity: a simpler approach is easier to understand and therefore appears more transparent. Justin shared that IBM's system dynamics team identified 7,000 questions in a smarter city modeling project.

IBM is working with other cities to apply the lessons learned in Portland.

This collaboration with the City of Portland has also proven valuable for IBM. IBM is applying its experience and modeling capabilities developed in this collaboration with the City of Portland to create offerings that will help other cities leverage systems dynamics modeling capabilities to enhance their city strategic planning efforts. Based upon IBM's experience in working with and conducting assessments of cities around the world, they've found that strategic planning in many cities is still being done in stovepipes without a holistic view of impacts/consequences across systems. By leveraging systems dynamics modeling techniques, IBM will be able to help other cities plan "smarter".

In closing, Justin and I discussed the potential for projects that affect multiple city metrics and multiple city organizations. The model lets you see how an idea like adding more walking and biking lanes can address obesity while also getting people out of cars, which in turn reduces the carbon footprint of the city. Bet you didn't think that addressing obesity could fit in a carbon reduction strategy. IBM and Portland see the relationships in this and many other areas.
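To give a feel for how one change can ripple through several metrics at once, here is a toy system dynamics sketch in Python. To be clear, this is not the IBM/Portland model: the function name `simulate`, the single stock (share of trips made on foot or by bike), and every coefficient are placeholders I made up for illustration. The only thing it is meant to show is the structure, where one stock drives both a carbon index and an obesity index.

```python
# Toy system-dynamics sketch. NOT the IBM/Portland model.
# Every number below is a hypothetical placeholder, not real Portland data.

def simulate(years, lane_miles_added_per_year, dt=1.0):
    """Euler-integrate a tiny stock-and-flow model.

    Returns a list of (time, active_share, carbon_index, obesity_index).
    Both indexes start at 100 and are driven by the same stock.
    """
    active_share = 0.10   # hypothetical starting share of walk/bike trips
    lane_miles = 100.0    # hypothetical starting miles of walk/bike lanes
    history = []
    steps = int(years / dt)
    for step in range(steps + 1):
        # Auxiliary variables: two "departments" reading the same stock.
        car_share = 1.0 - active_share
        carbon_index = 100.0 * car_share / 0.90
        obesity_index = 100.0 * (1.0 - 0.5 * (active_share - 0.10))
        history.append((step * dt, active_share, carbon_index, obesity_index))

        # Flows: more lanes raise the share people drift toward (assumed
        # linear and capped at 50%); adoption closes the gap gradually.
        target_share = min(0.5, 0.10 + 0.001 * (lane_miles - 100.0))
        adoption = 0.3 * (target_share - active_share)
        active_share += adoption * dt
        lane_miles += lane_miles_added_per_year * dt
    return history


if __name__ == "__main__":
    for label, added in (("no new lanes", 0.0), ("20 miles/year", 20.0)):
        t, share, carbon, obesity = simulate(10, added)[-1]
        print(f"{label}: share={share:.3f} carbon={carbon:.1f} obesity={obesity:.1f}")
```

Comparing the two runs, the scenario that adds lanes ends with a higher walk/bike share and lower values for both indexes. That is the cross-department link described above, just with invented numbers.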

How valuable is the IBM Smarter City model? We'll see some of the first results from Portland.

Rob Howard is the CTO/founder of enterprise collaboration software company
