A few months ago I decided to change some of the ideas I was focusing on. With any change like that, you would normally worry about how it affects traffic to your blog. I purposely don't worry about traffic as much as about writing on things I find interesting for my research, my clients, and plain curiosity. Still, I frequently look at the traffic to see what people find interesting.

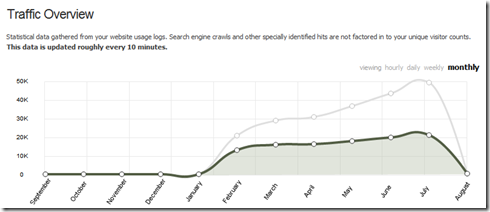

Yesterday I was watching my traffic because the July 31 numbers made it look like my blog would hit 50,000 views. I got so close. Note: I switched hosting from TypePad to SquareSpace in February, which is why the September to January numbers are at 0.

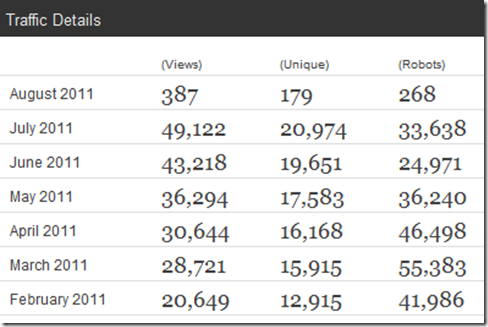

The exact numbers for Feb to July are.

I expect August traffic to be lower because of summer holidays for Northern Hemisphere readers. Here are the top 25 cities that hit my blog.

Thanks for visiting The Green (Low Carbon) Data Center blog. I should hit over 50,000 views by the end of the year.

-Dave Ohara